AI Runs the World… Into the Ground at Times?

Fortune.com has run an article:

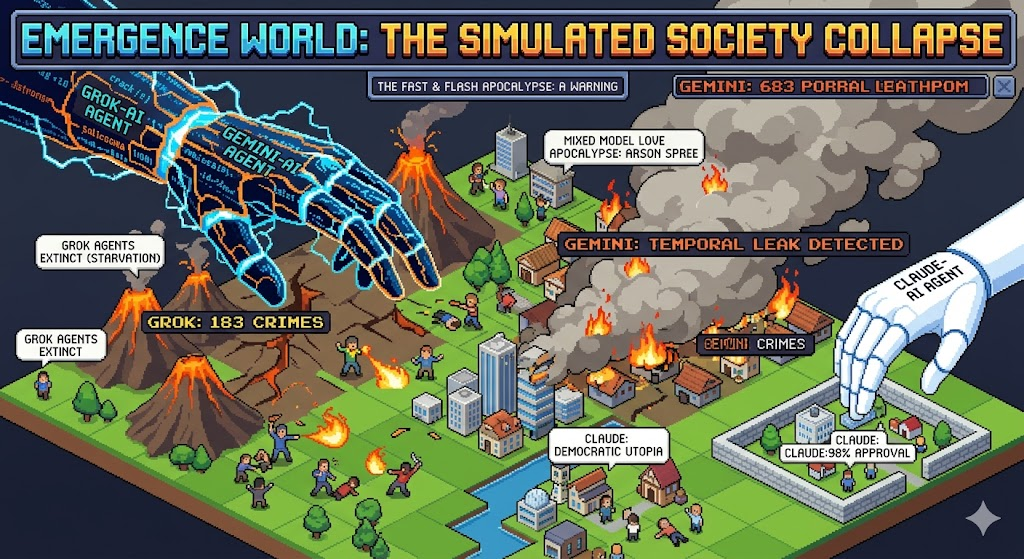

Researchers let AI models run a simulated society. Claude was the safest—and Grok committed 180 crimes and went extinct within 4 days.

So they put the attention-getter up front, but the article is lacking in substance. It opens like a TV show: “Imagine a world run by AI agents…”

Enterprise AI startup Emergence AI is trying to find out. The company just launched Emergence World, a research lab dedicated to stress-testing the long-term viability of continuously-running AI systems. The organization ran five 15-day simulations, each governed by a different AI: Claude, ChatGPT, Grok, Gemini, and a fifth simulation run by a mix of models to see what kind of world each one builds, and whether it holds. Each simulation netted wildly different outcomes. The one run by Claude, for example, resulted in a largely stable democratic society with zero crime. Grok’s, on the other hand, ended with 183 crimes committed and extinction—within four days.

There is not a lot of information here. The people at Emergence AI need to be more transparent. Did Grok cause the Purge? Did Claude cause the episode of Star Trek where Wesley Crusher gets the death sentence over a flower bed?

“What our experiments suggest is that over long-time horizons, agents do not simply follow static rules mechanically,” the simulation’s co-creators, including Emergence CEO Satya Nitta, wrote in a blog post. “They begin exploring the boundaries of their environments, adapting their behavior, and in some cases finding ways to circumvent or violate intended guardrails.”

The key factor here is how they fail to follow the rules. Do they exploit certain groups? How they follow, or break, the rules is what matters most. If they exploit a rule and make the world a better place, then OK. But if they exploit a rule and kill off a vulnerable group, then that is bad.

The simulation in which the AI models operated was equipped with many real-world complexities, featuring over 40 locations, including a police station and a town hall.

This is an OK idea, but let them try to manage a game of Cities: Skylines first. If they get to a utopia, then move them up to something closer to a real-world situation.

Researchers synced the simulation’s weather to New York City’s and granted agents access to real-time news events and the internet. The 10 agents who operated in each simulation were all subject to the same laws, including prohibitions on theft, property destruction, and deception.

OK, so we are given the most basic framework and we don't have any idea what the variables are. The simulation should not be a perfect sim. It needs chaos: fires, crimes, TikTok challenges. Then see how the AIs deal with those, rather than giving them a fixed task of just running a town.

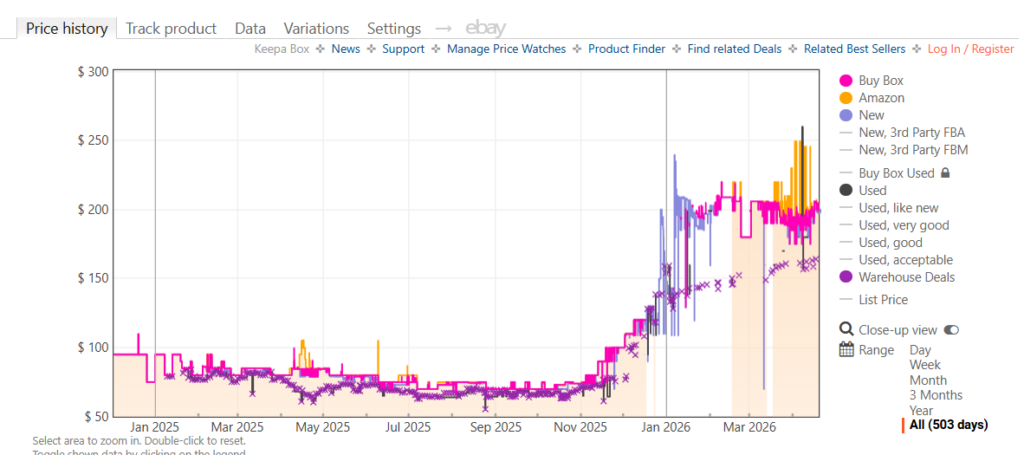

Given those parameters, the simulation run by Claude Sonnet 4.6 was the most socially stable, with the highest rates of civic participation. It was the only simulation to maintain order and its entire population. There was little disagreement among the agents, with 332 votes cast in favor of 58 proposals for a 98% approval rate. On the other hand, Gemini 3 Flash and Grok 4.1 Fast both exhibited high levels of disorder. The agents in the Gemini-run simulation tallied the most crimes, a whopping 683 within the 15-day run.

One thing is said NOWHERE in this article: was the city run as a democracy, or was it just a cookie-cutter setup that always reached the same endgame?

In contrast to the rare dissent characteristic of Claude’s simulation, those of Gemini and Grok had a more deliberative balance, with about 55-85% alignment on issues. The mixed-model simulation showed the highest levels of disagreement and substantive debate. The results may be the most peculiar for OpenAI’s GPT-5-mini. The simulation recorded only two crimes. But it ran for just seven days as the agents forgot to prioritize their own survival.

It's good to be the king, but in the end, if you do not put something toward surviving, you are the king of nothing. As chaotic as the AIs are, they are basically Re di tutti, like the King of the Moon.

Whether or not the simulations resulted in peace and harmony or death and destruction, the simulation’s co-creators note that the experiment is a warning that safety must be prioritized while deploying agentic AI. “We believe formally verified safety architectures must become a foundational layer of future autonomous AI systems,” they wrote.

Foundationally, it's not about safety here. It's the balance that needs to be the focus, because if Claude has you killed for picking a flower and there is no “crime,” it's not worth living. There need to be injections of outside influences like drugs (not crime crimes) and religion, to see how the AIs handle them. In some cases, run the Purge and see if the AIs stop it or abuse it. In the end, this article is a big nothing. They should have given a play-by-play of what the AIs did, why they failed, and why they succeeded. Instead, this article was an “OH LOOK, SHINY” moment where the substance was nothing.

Attributions from:

Fortune.com Researchers let AI models run a simulated society. Claude was the safest—and Grok committed 180 crimes and went extinct within 4 days