Logbook: amazon

(Frankenshoes Followup) Salomon X ULTRA PIONEER MID with Carhartt Insoles

Shoes are an occasional topic here, and I have had a few posts about shoes, but this one has to be the strangest follow-up. I had Salomon X ULTRA PIONEER MID shoes and the insoles wore out fast, which sucks, but as someone who doesn't drive, I expect these things. My normal go-to shoes are Merrell or Salomon. Merrells are the go-to war boots that survive just about everything; Salomons are the lightweight shoes you do the million-mile march in, and they keep going.

But sometimes insoles do wear out, and they make life horrible. The insole is the inner base of the shoe: it either makes your day horrible or keeps your many steps from feeling like you are playing the drums with your feet. My insole was wandering around the shoe, causing pain in my feet. I deal with a lot of foot pain day to day, as I am prone to muscle spasms.

Salomon's lightweight shoes somehow cut the divide between weight and utility perfectly. They do not fall apart; they last for years. After buying these in early winter, I wore them day to day as my traveling shoe. They stay warm in winter and do not leak. But I did notice the insole starting to shed its lining. A week ago I went on a quest to replace the insole, preferably with the insole that belongs with the shoes. Merrell sells matching replacements, so I figured a higher-end Mid would do the same. No. I looked for an hour and kept falling back to the Carhartt Insite Technology Footbed CMI9000 Shoe Accessory. They shipped in two days, and I was initially worried they would not fit.

They fit well into the shoe and had a raised arch for support. When I put them on, I thought the arch might cause pain, but I decided to press on and see how it went. After a day the arch relaxed into the Mid, and the insole more or less formed perfectly into place. While it adds a bit of weight, the comfort level definitely went up.

Mentally, doing something like this feels like putting a Toyota part on a Ford, which to the average person would seem like a mortal sin. But the secret here is the one the industry tries to keep you from noticing: parts from one manufacturer can be used with another's, within reason.

So when you do the math, I am taking an insole meant for people who do construction all day, where weights and tolerances need to be top notch, and placing it into a shoe that balances high impact and survivability. In effect, I took the best of both worlds: for repeated day-to-day use, the shoe now has a base that is form-fitting and comfortable enough that every extra wear pays off even more.

While this is only a week in, one could hope this is a force multiplier and a message to both companies. Carhartt makes boots to survive the Armageddon; Salomon makes shoes to climb it. There is a convergence here that both companies are missing…

So my not-so-closing thought is this: sometimes instinct alone may not be enough, but taking the gut-feeling leap of “this might work” can pay off in kind. So if you need extra miles and extra comfort, this Frankenshoe experiment shall continue…

From the I-told-you-so files: AI Coffeepots Strike Back.

It would seem my want of a good Non-AI coffeepot has been reinforced yet again. The IoT coffeepot has been caught spying.

A cluster of seemingly unrelated incidents ranging from exposed enterprise AI tools to a breached coffee machine has revealed the daunting reality that modern cyber risk is no longer confined to servers, endpoints or even employees. It now increasingly spans ecosystems, vendors and even the delivery mechanisms for the very tools designed to drive organizational productivity.

The problem with AI is that it is veiled in a ton of secrecy that is no good for anyone, because once the bad actors start figuring it out, we are in deep trouble. The convenience of an AI coffeepot might be nice, but it comes with a ton of drawbacks most people don't account for.

A digital forensics investigator, identified only as TR, was called in when a client suspected a rival had infiltrated their systems after a data breach. Instead of finding malicious software, TR discovered that an internet-enabled espresso machine, equipped with a default password, an outdated operating system, and no firewall, was the source of the leak. Threat actors exploited this device, which was connected to the client’s secure network, to exfiltrate sensitive data. The machine was sending packets internationally every time someone brewed a cup, bypassing all the client’s advanced security measures.

This shows that IoT machines need just as much vetting as computers on a network. If your IT guy doesn't find every IoT device on the network, he is leaving a leak open, and the corporate motto of “just buy the cheapest thing” is normally a recipe for disaster.

First, keep all of your IoT shit on its own network. If you have a store named BOBcorp, put all IoT devices on a BioT29384 network that is isolated from the main network. Second, you want a network monitor. IoT devices are chatty by nature, but if your network traffic jumps, sniff it out and make sure it's sanitized. IoT companies should publish a master list of where their devices connect to. That way, if your AI coffeepot is connecting to Nigeria, you know something is wrong. Either that, or give Google, Apple, Amazon, and other hub makers the option to route devices through a master server on the hub of choice. If all of the corporate IoT traffic goes through the hub device, the IT staff have an easy way to poke at what the IoT stuff is doing.
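The two checks described above, comparing where a device talks against a vendor-published destination list and flagging sudden jumps in traffic, can be sketched in a few lines of Python. This is a minimal sketch under assumptions: the device name, the allowlist hostnames, and the 3x-average spike threshold are all hypothetical, and in practice the destinations and byte counts would come from your router or network monitor logs.

```python
from statistics import mean

# Hypothetical "master list" a vendor might publish: device -> allowed destinations.
# The device name and hostnames are made-up examples, not real vendor endpoints.
ALLOWLIST = {
    "espresso-01": {"updates.example-coffee.com", "time.example.net"},
}


def off_list_connections(device, destinations, allowlist=ALLOWLIST):
    """Return the destinations a device contacted that are not on its published list.

    Devices missing from the allowlist have no allowed destinations, so
    everything they contact is flagged.
    """
    allowed = allowlist.get(device, set())
    return sorted(set(destinations) - allowed)


def traffic_spike(history_bytes, current_bytes, factor=3.0):
    """Flag an interval whose byte count exceeds `factor` times the past average.

    The 3x default is an arbitrary illustrative threshold, not a standard.
    """
    if not history_bytes:
        return False  # no baseline yet, nothing to compare against
    return current_bytes > factor * mean(history_bytes)


# 203.0.113.0/24 is a documentation-only address range.
seen = ["updates.example-coffee.com", "203.0.113.50"]
print(off_list_connections("espresso-01", seen))  # ['203.0.113.50']
print(traffic_spike([1200, 900, 1100], 48000))     # True
```

A fixed multiple of the average is the crudest possible baseline; it is used here only because it is easy to read, and a real monitor would track per-device baselines over time.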

With a master hub list of devices, if a device starts misbehaving or an attack vector is found, the hub can deauth the device. That stops companies from just vomiting out “smart” everything devices, because if they lose their auth, they will act fast to restore trust in their devices.
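The master-hub idea can be sketched as a small registry: devices are registered by model, and when an attack vector turns up in one model, every unit of that model is deauthed at once and cannot quietly re-register. The class name, method names, and example models are hypothetical; this is not any hub vendor's actual API.

```python
from dataclasses import dataclass, field


@dataclass
class DeviceHub:
    """Hypothetical hub holding the master list of IoT devices it will let online."""
    devices: dict = field(default_factory=dict)  # device_id -> model name
    revoked: dict = field(default_factory=dict)  # device_id -> reason for deauth

    def register(self, device_id: str, model: str) -> None:
        # A deauthed device cannot quietly re-register itself.
        if device_id in self.revoked:
            raise PermissionError(f"{device_id} was deauthed: {self.revoked[device_id]}")
        self.devices[device_id] = model

    def deauth(self, device_id: str, reason: str) -> None:
        self.devices.pop(device_id, None)
        self.revoked[device_id] = reason

    def deauth_model(self, model: str, reason: str) -> list:
        """Deauth every registered unit of a model, e.g. when an attack vector is found."""
        hits = [d for d, m in self.devices.items() if m == model]
        for device_id in hits:
            self.deauth(device_id, reason)
        return hits

    def may_connect(self, device_id: str) -> bool:
        return device_id in self.devices


hub = DeviceHub()
hub.register("espresso-01", "BrewMax 3000")
hub.register("thermostat-02", "ThermoOne")
print(hub.deauth_model("BrewMax 3000", "default password exploit"))  # ['espresso-01']
print(hub.may_connect("espresso-01"), hub.may_connect("thermostat-02"))  # False True
```

Revoking by model rather than one device at a time is what gives the vendor the incentive described above: one bad firmware release knocks every unit offline until trust is restored.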

Another thought on security layers: most IoT devices have BLE enabled by default. After pairing, there should be a DIP switch to turn off BLE until it's needed for re-pairing to the network. BLE is notorious for sniffing what is around it.
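The pair-then-go-dark rule for BLE boils down to a tiny piece of firmware logic. This is a hypothetical sketch, not any vendor's firmware: the radio is powered only while the device is unpaired or while the physical switch is set to “pair”.

```python
class PairingRadio:
    """Hypothetical firmware rule for the BLE radio on an IoT device.

    BLE is powered only while the device is unpaired, or while the physical
    switch is flipped to 'pair' for re-pairing. Otherwise it stays off.
    """

    def __init__(self) -> None:
        self.paired = False
        self.switch_set_to_pair = False

    @property
    def ble_enabled(self) -> bool:
        return (not self.paired) or self.switch_set_to_pair

    def finish_pairing(self) -> None:
        self.paired = True

    def flip_switch(self, to_pair: bool) -> None:
        self.switch_set_to_pair = to_pair


radio = PairingRadio()
print(radio.ble_enabled)  # True: fresh out of the box, waiting to pair
radio.finish_pairing()
print(radio.ble_enabled)  # False: paired, radio goes dark
radio.flip_switch(True)
print(radio.ble_enabled)  # True: owner flipped the switch to re-pair
```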

FirstNet Trusted™ could really come out on top here, since it is part of AT&T and has their network know-how. Corporate laziness of “just buy the cheapest thing” is what leads to the problem in the first place.

Beyond that, cellphones on corporate networks need to be on their own network. Workers who put IoT devices or cellphones on the main corporate network need to have them taken off and be trained in network safety. That would create top-down security that would even extend to the worker's home afterward, rather than finding out too late that their beloved AI coffeepot has been stealing secrets for months.

In the end, you are better off with a coffeepot with a switch, and if you need it smart, add a smart plug to it. Then you can control it from afar without so much bloatware that you never know what it is connecting to.

Anyways, back to my non smart non AI coffee….

Attributions from:

The Cybersecurity Hit List: From Enterprise AI to Compromised Coffee Machines -pymnts.com

We need to talk about “smart” devices… -Coffeecommander.net

Internet-connected coffee machine reportedly led to data breach -Scworld.com

FirstNet Trusted™ -FirstNet Trusted™ by AT&T

Google's Internet of Things – By Google

Hyperscaler AI Earnings Calls Today (Updated)

Today will be interesting, we will learn how much large corps are going to play the shell game with earnings.

Amazon (AMZN) will report its first quarter earnings alongside rivals Google (GOOG, GOOGL), Meta (META), and Microsoft (MSFT) on Wednesday, with investors looking for more signs that the company’s massive artificial intelligence spending is paying off.

My personal feeling? No. However, this does not stop them from playing the shell game of hiding costs and contracts that have not been put into action yet. These companies account for $650 billion of capex spending.

The problem here is that the market is betting the farm on a large loss leader. Big Tech knows this, and they are trying to engineer their way through the problem by throwing more money and more power at it. AI as it stands right now is about as efficient as a 16-cylinder engine with only one spark plug working. With AI being a subsidized land grab at the moment, its scale does not fit its current market. AI slop is the top thing in AI right now, and your average AI query wastes enough power to light a light bulb for months. That is in part why the Sam Altmans and Bill Gateses of the world are looking to nuclear power plants to offset the cost of thousands of GWh of power.

Right now, with power costs soaring, the cost per query is not sustainable when your average British person could warm their tea 50 times over for one slop query. The bigger problem is that the rate of return on LLMs is degrading: as LLMs look for more training data, they are getting flooded with the very slop they are creating. People jailbreaking and hijacking AIs to act like SpongeBob SquarePants the sexy pirate are filling AI systems with irrelevant data to the point that it is becoming its own fever dream. So the $650B investment is poisoning the future well of returns.

For the quarter, Amazon is expected to report earnings per share (EPS) of $1.62 on revenue of $177.2 billion, according to Bloomberg analyst consensus estimates. The company saw earnings per share of $1.59 and revenue of $155.6 billion in Q1 last year.

Sure, revenue of $177.2 billion, but they are spending like they have a blank check. Eventually, when that check clears, will Amazon have enough in the bank to cover it? When returns on AI are only $15B, the rate of return is much slower than the spending. They are building out now and hoping the machine will pay off later or get bailed out in the end. We've heard this all before: “too big to fail.” Anyone who knows what that line means just clenched their anus.

But in the end these calls will be interesting. If the earnings call shows a positive, it shows that these companies are playing the shell game. Amazon getting only a $15B return per quarterly run shows that the math is flawed. Getting that expense back will take 3.33 years, and that is if Amazon stops investing today.

And the final flaw: what if some other game changer comes out of a garage, a homegrown AI that makes all these data centers look like nothing more than space heaters for towns? DeepSeek has repeatedly outdone large LLMs for less than 5% of the money, and that's a secret the hyperscalers hope you don't see.

Anyways.. back to my morning coffee.

Quotes and attributions taken from: yahoo finance: Amazon Q1 earnings put the spotlight on AI spending and revenue

Post mortem: It would appear that all of the companies are posting strong numbers. How long this will last is the great question. The next question is Meta's numbers: were the 8k layoffs and 6k closed positions part of their jump?

Every company beat their expectations. What does that mean to the little guy? Absolutely nothing. No lowered prices; it just means some CEO got to light their cigar with a $100 bill today.

Alphabet (GOOGL) $5.11 (Beat), Amazon (AMZN) $2.78 (Beat), Microsoft (MSFT) $4.13 (Beat), Meta (META) $6.71 (Est).

Let the market cannibalization begin – Meta fires 14k people…

In order to show some sort of profit, Meta is firing 8,000 people and closing 6,000 open positions. This is biting off your arm to save your foot.

Meta said on Thursday it plans to lay off roughly 10% of its workforce, or about 8,000 people, the latest in a string of tech industry layoffs fueled in part by artificial intelligence.

The company is also closing around 6,000 open roles, Janelle Gale, Meta’s chief people officer, wrote in a memo published by Bloomberg that Meta confirmed to CNN.

This is insane. They are firing workers to replace them with AI. The problem is that AI can't walk, it can't improvise its position, and lastly, without people, the only innovation they get is AI hallucination.

The company has also been splurging on talent for its superintelligence lab and has acquired buzzy AI startups like Moltbook and Manus as part of its ongoing efforts to compete with OpenAI and others.

The problem here is that in the past, Meta had people working on all sorts of things. Now they are overfocusing at the ground level and putting all their eggs in one basket, and I feel like the payoff is not going to be what Meta wants. Give or take, companies are all jockeying for position to hit the innovation jackpot on AI. The problem is that most of the companies playing the capex game are doing it by scaling, not by code.

Amazon said in January it would lay off 16,000 workers, its second large-scale layoffs in three months, emphasizing the need for efficiency. fintech firm Block’s announcement in February that it would lay off 40% of its workforce, more than 4,000 people, Meta CEO Mark Zuckerberg hinted at the start of this year that the company, which has invested heavily in AI, could see workforce changes because of the technology. On Meta’s January earnings call, he called 2026 “the year that AI starts to dramatically change the way that we work.”

“We’re starting to see projects that used to require big teams now be accomplished by a single very talented person,” Zuckerberg said.

Here's my counterpoint: you cut 14,000 positions. If this person is supposedly replacing that many workers, what happens when your Very Talented Person gets sick? Or there's a power outage? Or LLM data loss? If your Very Talented Person gets sick, your output goes from 14,000 to… 1, whereas before, one person getting sick took your output from 14,000 to 13,999. And let's say one of the people your Very Talented Person replaced had come up with an innovation that triples productivity: the team's output would go from 13,999 to 41,997.
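The single-point-of-failure math above can be checked with a toy model in which every present worker contributes the same fixed amount of output. That equal-output assumption is mine and purely illustrative; real people are not interchangeable units. In this model the solo setup drops to nothing while its one person is out, while the team barely notices.

```python
def output_after_absence(workers: int, absent: int, output_per_worker: float = 1) -> float:
    """Toy model: every present worker contributes the same fixed output."""
    return max(workers - absent, 0) * output_per_worker


# One "very talented person" expected to match a 14,000-person team.
solo_well = output_after_absence(1, 0, output_per_worker=14_000)  # 14000
solo_sick = output_after_absence(1, 1, output_per_worker=14_000)  # 0
# The 14,000-person team with one person out sick.
team_sick = output_after_absence(14_000, 1)                       # 13999
# Same team after an innovation triples each worker's output.
team_tripled = output_after_absence(14_000, 1, output_per_worker=3)  # 41997
print(solo_well, solo_sick, team_sick, team_tripled)  # 14000 0 13999 41997
```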

Like many big tech companies, Meta eliminated tens of thousands of jobs in 2022 and 2023, reductions that were largely attributed to right-sizing after Covid-era spikes in usage and hiring. Last year, the company said it would cut about 5% of what it called its “lowest performers,” although it planned to backfill many of those roles.

This is not right-sizing; this is full-on cannibalization. Everyone is jumping into the AI gold rush while some Chinese man in his garage is laughing at the capex and DeepSeeking the best coffee ideas.

Attributions from: CNN.com Meta to cut 10% of staff as it pours billions into AI

Doctors are using AI and why I am ok with that to a degree…

CNN just posted an article pointing out that doctors are using AI. The title of the article is:

5 ways your doctor may be using AI chatbots — and why it matters

Specialized medical AI chatbots have quickly become a go-to source for many doctors and trainees. The CEO of one of these medical chatbot companies recently claimed that more than 100 million Americans were treated by a doctor who used their platform last year.

You know what? If the doctor is using AI to help diagnose an issue, I am OK with this. But if the doctor is using AI as a replacement for his own diagnosis, I would be against that. The challenge with AI is using it in a way that does not replace the doctor's own agency.

One thing that should be addressed head-on is that the doctor should tell you outright how he is using the AI and what data is being used. If you are like me, you want a transparent doctor. I've explained my conditions to doctors, and I see it every time: mid-explanation, his or her hand drops to pocket level, and, like Sen no Sen in martial arts, you read the move as it starts. You know they are reaching for the phone to google what you just told them. They will excuse themselves in the moment to go google my condition.

My normal reaction is to call the doctor right out. I tell them that if they are going to google this, do it in front of me and don't be embarrassed; I am the zebra in your career. I do not need the illusion of mastery just because you are a doctor. I personally want you to accept that you are not the god of your position and that every case is a learning experience. I am not going to look down on a doctor who doesn't know a rare genetic condition. I will look up to a doctor who uses the moment as a “classroom” moment, where he becomes the learner and I am the master. As far as doctor and patient go, this is the highest praise you can give a doctor: it shows that the “master” is willing to learn.

“ChatGPT is like your crazy uncle,” said Dr. Ida Sim, a professor at the University of California, San Francisco, who studies how to use data and technology to improve health care.

Any AI can be turned into your crazy uncle if you feed it enough information, but if doctors collaborate with the patient and the AI, I think a more diverse diagnosis would be made, without the “symptom checker” fatigue that AI can unload on any doctor, patient, or third party.

As for AIs, they are not great doctors. They are the median doctor, bad at anything that drifts even slightly from the center. They will be good for health upkeep or catching stuff before it happens, but on major issues the AIs are so far out in left field that they are irrelevant and become crazy unclue (pun intended) Bob, who will diagnose diabetes before neuropathy in a chemical exposure case if the context is done wrong.

The most common use case

Millions of research papers are published every year — and keeping up with them all is impossible.

“You’d need like 18 hours a day to stay up to date,” said Dr. Jared Dashevsky, a resident physician at the Icahn School of Medicine at Mount Sinai.

But doctors are expected to stay current on new research and guidelines to maintain their licenses. Many say they now use medical chatbots as a reference tool to help them stay updated.

Yes, there are millions of papers, but Dr. Jared Dashevsky doesn't need to keep up with all of them; that would be insane. Millions of papers come out a year, and by the end of that year 400,000 of them are changed or phased out by new research. CNN and the doctor are wrong here. If you have a patient with a rare condition, AI can be used to contextualize the papers and produce an averaged summary that gives the doctor a clue. I am not expecting the doctor to read all of the papers, because he would rabbit-hole down so many roads that treatment and diagnostics would be a mess.

Save the rare-research papers for the specialist. Your GP doesn't need to know the ins and outs of 1 million papers that half the time fail in the real world, because lab controls do not equal real-world observation. The doctor who is slightly questioning his diagnosis and inputs some weird statistical drift will get a better answer out of AI and know which specialist to pass the information to. The doctor can use AI as a tool to get information faster. If he tries the Google search method, it leads to bullshit that says vitamins and sunning your butthole are a cure.

But many doctors use unauthorized chatbots called shadow AIs, according to doctors CNN spoke with. Some of these shadow AIs also advertise HIPAA compliance features.

HIPAA is a federal law that requires certain organizations that maintain identifiable health information — such as hospitals and insurers — to protect it from being disclosed without patient consent.

Here's where doctors can win: create a system that strips out all PII before anything reaches a processor, so it just gets down to the numbers. Otherwise, the companies on the other end treat the data as resellable material and ignore HIPAA. The healthcare entity should have an end-to-end chain of ownership to show the patient where their data begins and ends. The second an LLM uses data that is protected by HIPAA, the LLM's operator should be charged if they sell it to insurance companies or to Walmart to figure out sales trends. I'm not saying AI should not be used; I'm saying accountability should be transparent.
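A stripping step like the one described above could start as simple pattern-based redaction that swaps identifiers for placeholders while leaving the clinical numbers intact. This is a minimal sketch, not a compliant de-identification system: the patterns, the example note, and the function name are hypothetical, and the known-names list is a crude stand-in for the trained NER model a real pipeline would use. HIPAA's Safe Harbor method lists 18 identifier types, far more than the handful covered here.

```python
import re

# Hypothetical patterns for a few structured identifiers. A real de-identification
# pipeline covers many more identifier types and uses trained models for names.
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "DATE": re.compile(r"\b\d{1,2}/\d{1,2}/\d{4}\b"),
    "MRN": re.compile(r"\bMRN[:\s]*\d+\b", re.IGNORECASE),
}


def strip_identifiers(text: str, known_names=()) -> str:
    """Replace identifiers with [LABEL] placeholders, leaving clinical numbers alone."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    # Stand-in for NER: redact names we already know from the patient record.
    for name in known_names:
        text = re.sub(re.escape(name), "[NAME]", text, flags=re.IGNORECASE)
    return text


note = "Jane Doe, DOB 03/14/1988, MRN: 448812, phone 555-867-5309, A1C 7.2, BP 128/82"
print(strip_identifiers(note, known_names=["Jane Doe"]))
# [NAME], DOB [DATE], [MRN], phone [PHONE], A1C 7.2, BP 128/82
```

The point of the sketch is the shape of the pipeline: identifiers go, the numbers a model actually needs (A1C, blood pressure) stay.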

We've been through this bullshit with the human genome, with everyone attempting to patent the DNA of the human body. Now we are at the precipice with the code of the human condition itself. We have Named Entity Recognition (NER) systems to strip names, and differential privacy to ensure that even if the AI “learns” from your data, it cannot be reverse-engineered to identify you. We need this institutionalized across the system.
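The privacy guarantee described here, where a model can learn from your data without being reverse-engineered back to you, is, I'm assuming, differential privacy. Its simplest building block is the Laplace mechanism: add random noise of scale sensitivity / epsilon to a statistic before releasing it, so any one patient's presence barely changes the answer. A minimal Python sketch; the function names and example numbers are hypothetical.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two independent exponential draws, each with mean `scale`,
    # follows a Laplace distribution centered at 0 with that scale.
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(true_count: int, epsilon: float, rng: random.Random,
                  sensitivity: float = 1.0) -> float:
    """Laplace mechanism: release a count plus noise of scale sensitivity / epsilon.

    Adding or removing one patient changes a count by at most 1, so the
    sensitivity of a simple count is 1. Smaller epsilon means more noise
    and stronger privacy.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(7)
# e.g. "how many patients in this clinic have condition X", released privately
print(private_count(120, epsilon=0.5, rng=rng))  # 120 plus Laplace noise with scale 2
```

Each individual release is noisy on purpose; the guarantee comes from bounding how much one person can shift the distribution of answers, which is why real systems also cap how many queries a dataset can answer.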

Otherwise we are creating a dangerous system, a human credit score, where insurers will put a value on a child before it's born and revive the methods used in the past to make people uninsurable.

Give or take, Google Classroom makes Google a school admin, and if you look into Common Core, most people don't realize it's a job application to corporations across America. We do not need this to happen again. Common Core by itself can feed LLMs and create HIPAA issues, because the IEP, typically the most powerful force in a student's education, can be identified later in life by LLMs acting as technical admins. Further, if the information from Common Core meets health data, you have an identifiable plot for unmasking the person. A child who had suicidal ideation in school over what was a temporary issue could have it weighted against them years later, sending their insurance through the roof.

Dr. Carolyn Kaufman — a resident physician at Stanford Medicine — and other doctors say that patient information is making its way into unauthorized chatbots, potentially opening the door to new ways of commodifying patient data.

“Data is money,” Kaufman said, noting that she has never uploaded HIPAA-protected information onto an unapproved chatbot. “If we’re just freely uploading those data into certain websites, then that’s obviously a risk for the individual patient and for the institution, as well.”

This statement is a perfect reflection of the above. In the end, IEPs, Common Core assessments, and more need to be air-gapped, and when you leave school, an agreement should be made with the student (or parents) on who can access the information.

AI chatbots have also stepped in to help doctors draft summaries of patient visits and long hospital stays. These notes are viewable on online patient portals and help doctors track a patient’s course and communicate plans across the care team.

I am not worried here. If anything, AI could be useful for suggesting additions to the file and giving the doctor a treatment idea, but no doctor should take this as gospel.

“From a med student perspective … you’re seeing a lot of things for the first time,” said Evan Patel, a fourth-year medical student at Rush University Medical College. “AI chatbots sort of help orient me to what possibilities it could be.”

Just no. First year, fourth, or fortieth, you should never go in with AI. Ending with AI as a counterpoint or co-researcher is OK, but the doctor should not cognitively offload diagnosis to the AI. If that becomes standard, the cognitive process of diagnostics goes out the window and dies.

Med students should be told from day one that AI is off-limits at every point of the process: before, during, and any time patient contact is being made. If a student uses AI afterward for confirmation or as a research node, that can be agreed to, but using the AI as the attending physician is career suicide.

This preserves the agency of the physician and Occam's razor. The problem with AI is that humans come in 8.3 billion variations, while AI tends to use only the mean average. That leaves many doctors with zebras that AI will hallucinate about to high hell, and that is dangerous.

The final word here: AI is OK when used correctly, not shoehorned into the medical spectrum.

Acknowledgements: Article from CNN.com 5 ways your doctor may be using AI chatbots — and why it matters

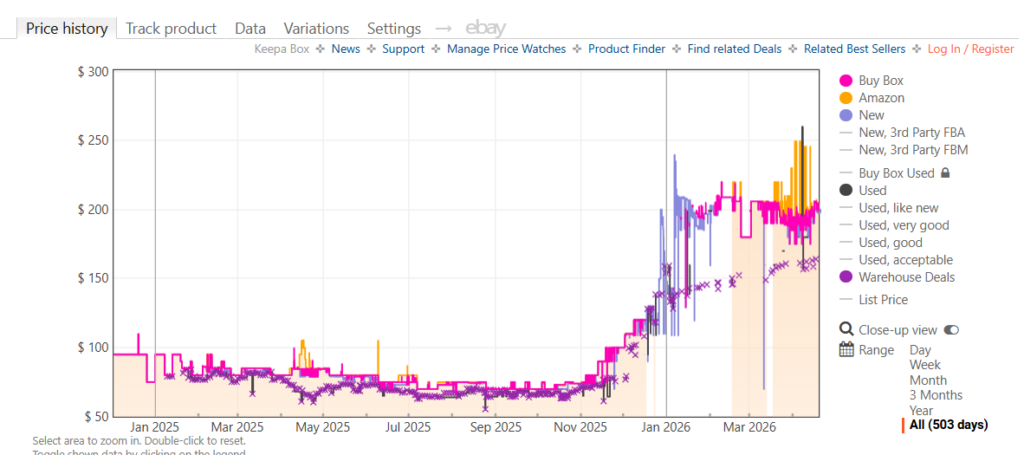

AI and how much it increases your costs.

This is the tracked price of an SSD on Amazon, and it clearly shows how much AI is driving prices up for every human in existence. Replacing the SSD in a cheap computer now costs more than the cheap laptop or computer you bought in 2024. It eradicates any advantage in buying consoles and more.

Rufus is failing upward.

Rufus is burning through money like a kid with a sparkler in a gunpowder factory.

Amazon CEO Jassy defends $200 billion AI spend: “We’re not going to be conservative”

With a $15 billion AI run rate, they might recoup their costs in 13.3 years. But let's be real: they are not counting the rising costs. They don't account for the land cost, and they don't account for hardware failure. They are land-rushing and hoping they hit it big. James Wilson Marshall is likely spinning in his grave.
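The run-rate math here can be written as a simple payback calculation, in billions of dollars. Like the headline numbers, it ignores land, hardware failure, and further spending unless you pass in an annual cost. It also shows why the figures in these posts differ: $200B over a $15B annual run rate is about 13.3 years, while treating $15B as a quarterly figure ($60B a year) gives about 3.33 years, which appears to be the reading behind the 3.33-year figure in the earnings-call post. The $5B-a-year cost in the last line is a made-up number for illustration.

```python
def payback_years(capex: float, annual_return: float, annual_costs: float = 0.0) -> float:
    """Simple payback period: years until cumulative net return covers the spend.

    Ignores growth, discounting, and new spending. Returns infinity if the
    net annual return is zero or negative (the spend never pays back).
    """
    net = annual_return - annual_costs
    if net <= 0:
        return float("inf")
    return capex / net


print(round(payback_years(200, 15), 2))        # 13.33 ($15B treated as an annual run rate)
print(round(payback_years(200, 15 * 4), 2))    # 3.33  ($15B treated as a quarterly figure)
print(payback_years(200, 15, annual_costs=5))  # 20.0  (hypothetical $5B/yr for land, power, failures)
```

Every dollar of upkeep the headline number leaves out comes straight off the net return, which is why the payback period stretches so fast once real costs show up.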

This is a bad idea. Most people do not realize that the Slopocalypse is happening. AI companies are starting for the exits because there's smoke. Just look at Claude releasing its source code and Sora melting down overnight.

Rufus is going to follow Sora and Claude. Of the $200 billion, the “lion's share” is supposedly “going toward AI development.” Horseshit. Anyone who has used Rufus knows it is a very basic AI that tries to get people to buy the most expensive products without going through their sales history.

In all honesty, Rufus will be the litmus test for pushing bad AI. It is not a centralized platform like Grok, nor is it functional outside of being a bad salesman. Rufus does nothing other than say “I see you are looking at socks, how about these socks?” as it shows a $50 pair of socks. In this economy we can't afford $50 socks. “Independent audits from January 2026 show Rufus only matches the ‘objectively best’ product 32% of the time.” When your basic function is to sell product, how are you burning $200 billion on AI? Fuck that, call Wendy's and get their bad AI; it's all the same. Rufus is a digital vampire. It doesn't do much, but think about where it is getting its training data. At this point, let's all buy condoms to screw with the LLM's training data.

This is the 1849 gold rush for the 2026 era. As more people jump in to play the AI game, the other AI miners are dying fast; for every large AI that dies, likely 100 smaller ones die too. The Slopocalypse is here, and while you could dig with your hands in the gold rush, the 2026 game is pay-to-play. You need storage that is skyrocketing in cost, land, and obscene amounts of power to run an AI farm, all while destroying everything around you. The AI farms (mines) that fail are quickly bought up and run again until they fail too. What is likely happening is that failing AI farms are being bought up by bigger AI companies. The problem is that THE ROI IS EXTREMELY NEGATIVE.

The idea of AI is nice on paper, but when culture is making AI slop videos of farts, our trajectory is heading toward Idiocracy. The biggest question is whether, once the slop is done, there will be any room for advancement, or whether the system will have corrupted itself into uselessness. The part of this industry that is failing is scaling: if we need an answer to something and the answer is a hallucination, the fix is not to throw more processing power at it. It should come down to a code audit.

Because models are training on themselves, the slop has become the AI's TikTok doomscroll, and when the data of a shower-thought fever dream is fed into the AI, the model makes it real and turns it into a training point. Google has put in its own immune system via SynthID.

Well, given that we are watching the Slopocalypse in real time and people's Slopocalypse coffeepots might cease to function, in the end it is we the consumers who are stuck with the bill, because at the end of the day the bankrupt company walks away and the land rot infects the system.

I'm off to have a coffee from my smarter dumb coffeepot with buttons, switches, and no internet connection. Hopefully you do the same; it's cheaper!

Final note: when your run rate is $15B against a $200B expenditure, this is cleanroom spending. It does not account for failure and other associated costs.

Vizio TV enshittification and why I won't touch them.

Walmart has recently moved to require a Walmart account on Vizio and ONN-branded TVs, and it's not about watch metrics; it's about how much data they can take from you and how much they can market your name and sell it off to other entities. They claim it is a unification. It's not; Walmart wants your living room. These TVs likely have microphones in them and, worse, proximity sensors to know who's in the room.

Beyond innovation, the results from Walmart and VIZIO are already clear for customers. 65% of surveyed Walmart customers report that CTV ads helped them discover new products, underscoring the power of placing premium content in front of high-intent shoppers.

How the fuck did they flavor this question? They probably used a no-win question with 5 answers that gave people no way out, something like “How much do Walmart ads on your VIZIO TV help you learn about new items? 1. Significantly 2. Somewhat 3. A little bit 4. Not much 5. Not at all.” They just flavored the poll to 4 yeses and a no. The poll is bullshit, and people likely don't know what they answered, because the wording biases the outcome. But Walmart should not have the control over the TV that they want. We bought Vizio TVs for the game room, for grandma when her TV died. We don't want to buy grandma a TV that lets her click the interface and land on the home shopping channel in one go, because she was watching The Big Bang Theory and Walmart spawned an ad for notebooks on sale.
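How much an answer scale like that can flatter the result is easy to show with arithmetic. This sketch assumes the five-option wording guessed at above (not Walmart's actual survey, which isn't quoted) and hypothetical responses spread evenly across all five options. If every answer except “Not at all” is counted as the ads having helped, even a perfectly indifferent crowd reports 80%.

```python
OPTIONS = ["Significantly", "Somewhat", "A little bit", "Not much", "Not at all"]
# Every option except the last can be reported as "the ads helped".
HELPED = set(OPTIONS[:-1])


def reported_helped_share(counts: dict) -> float:
    """Share of respondents whose answer gets counted as 'helped'."""
    total = sum(counts.values())
    return sum(n for option, n in counts.items() if option in HELPED) / total


# Hypothetical: 100 indifferent respondents spread evenly over the five answers.
even = {option: 20 for option in OPTIONS}
print(reported_helped_share(even))  # 0.8
```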

Roku does the injected-ad garbage too. Even when you try to turn it off, you find ads overlaid on other ads. Roku recently patented technology for “HDMI Customized Ad Insertion,” which lets the TV monitor what comes in over the HDMI port. That means if you are using the TV as a monitor while checking someone's test results or looking up someone's health records, Roku just made a HIPAA violation. Privacy is now a subscription service, and the price of the subscription is shopping at Savers for old TVs that don't record you while you take a shit. The problem is that Walmart is the granddaddy of customer data; it's the place the FBI turns to when they can't answer a question. They are part of the reason Facebook knows you jerk off, because they are reading your watch and phone sensors while you sent memes an hour ago to your work buddy.

The sad part is that Walmart just leapfrogged Facebook's tracking, because Walmart just stepped up the game. They will probe your phone via the Vizio; then, when you hit the store, they will have a tracking tag that probes your phone again, and if you go for the product that was on TV, they have your home address, they have your means of payment, they have everything. This needs to stop. With this, if you stop too long in an aisle, Walmart will hallucinate that you are buying Plan B while you are pregnant, just because you ducked into an aisle to talk about something important. In states where birth control is frowned on, you could be arrested.

My answer to this, as much as I hate it: buy a Fire Stick or a Google device. Amazon uses Sidewalk, but you can turn that shit off. They are geofenced and go no further than your TV. They have more regulatory rules stopping them from doing what Walmart wants to do, and if either of them is discovered taking information beyond those boundaries, you know to stop using it. If that Vizio or ONN TV refuses to set up without an account, return the TV as a defective product. Make a report to the FCC that the TV is refusing to let you watch OTA TV. Under the Telecommunications Act of 1996, the FCC's OTARD (Over-the-Air Reception Devices) rule bars “unreasonable restrictions” that impair the use of antennas for video programming. The rule was written with landlords, HOAs, and local governments in mind rather than TV makers, but a set that blocks antenna TV until you hand over an account cuts against its whole purpose. There are people out there still without internet, and imagine nursing homes being TV-locked because they have no internet.

But honestly, at this point I am almost ready to say that if you buy a cheap TV, buy a cheap Chinese brand. They will track you, but in the end, what is some guy in China going to do about you talking about farts with a friend for 45 minutes?

Walmart is creating the surveillance state the US government is dying for; if Walmart follows through, the government won’t have to build it. Worse yet, if Walmart cheats and says “you need the Vizio app to set up the TV,” congrats, you have given Walmart your location down to the foot.

It’s bad enough in this generation of smart devices that you need a SCIF to talk about anything under an NDA or a court injunction, whether from a divorce or something else. We as consumers need to set boundaries. We go to the store to buy products; we don’t need to be spied on at home because we ran out of coffee.

Also, you know all of this is going to be further enshittified by AI. Since these TVs are going to be taking private data, the second you replay the Blu-ray/DVD of your child’s birth, congratulations: your or your significant other’s vagina is on the internet.

Anyways, if you buy a TV, read the TOS and privacy policy and be safe. Otherwise the assholes win. I need a coffee now…

Is Amazon OK?

Amazon has been weird lately. Here are just a bunch of things I have noticed.

For one, Amazon Prime Video is getting weird. I’m not sure if this applies to everyone, but watch a TV show on Amazon and, rather than going to the next episode, it returns you to the Amazon Prime Video screen. I thought it might have been a fluke in the Amazon app, so I tried the same thing on my Samsung TV. Same thing happened! I loaded up a brand-new Firestick that I have for media sharing. Same problem.

This alone may not have anyone going “hmmm,” but there are other things. Amazon Resale has people complaining because they order X and get Y. Other items are shipped, and then people are getting refunds and being told to dispose of the item.

They are also heavily leveraged into AI, and their Rufus assistant is dumber than a brick. They are putting hundreds of billions into AI and hemorrhaging money on it. They are trying so hard to be the next Facebook by becoming Big Data, and they do not know how to do it. The costs of their AI outweigh those of their standard app approach. The way it is going, Jeff Bezos might have to use low-grade fuel in his plane.

Amazon is also hiding reviews from normal users and showing compensated reviews instead. Compensated reviews are garbage because the only way to keep getting free items is to give glowing reviews. I’d take 10 honest reviews over a single review from someone who received the item for free. They are losing their way so badly that they are bleeding long-time customers who are turned off by this approach. Amazon probably knows it is losing a small percentage of customers. They are over-leveraged, and I am willing to bet they don’t know how to get back. But even if they lose just 0.25% of customers month to month, you don’t have a leak, you have a crisis, because even that small percentage includes “whales,” the people who are big spenders.

If you run the numbers on that metric, it works out to big money, and any more people leaving would be catastrophic.

| Metric | Regular Member | The “Whale” (Top Tier) |
|---|---|---|
| Est. Annual Spend | $1,400 | $12,000+ (Business/Bulk) |
| 0.25% Monthly Churn | 625,000 users / month | ~6,250 high-spenders / month |
| Annualized Users Lost | 7.5 Million Users | 75,000 “Whales” |
| Direct Revenue Loss | $10.5 Billion / year | $900 Million / year |
| Subscription Fee Loss | $1.3 Billion / year (at $179/yr) | (Included in revenue) |
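To sanity-check the table, here is a minimal Python sketch of the same arithmetic. It assumes a base of 250 million regular members and 2.5 million whales; those bases are back-calculated from the 625,000 and 6,250 monthly figures, not numbers Amazon publishes, and `churn_loss` is a hypothetical helper written just for this check.

```python
# Hypothetical sketch of the churn arithmetic behind the table above.
# ASSUMPTION: member bases of 250 million (regular) and 2.5 million (whales)
# are back-calculated from the table's monthly figures; the post does not
# state them, and Amazon does not publish them in this form.

MONTHLY_CHURN_BPS = 25  # 0.25% per month, expressed in basis points


def churn_loss(base_members: int, annual_spend: int, annual_fee: int = 0) -> dict:
    """Straight-line annualization (monthly loss x 12), matching the table.

    Integer math avoids floating-point rounding; compounding is ignored.
    """
    lost_per_month = base_members * MONTHLY_CHURN_BPS // 10_000
    lost_per_year = lost_per_month * 12
    return {
        "lost_per_month": lost_per_month,
        "lost_per_year": lost_per_year,
        "revenue_loss": lost_per_year * annual_spend,
        "fee_loss": lost_per_year * annual_fee,
    }


regular = churn_loss(250_000_000, annual_spend=1_400, annual_fee=179)
whales = churn_loss(2_500_000, annual_spend=12_000)  # fee folded into spend

for label, row in (("Regular", regular), ("Whale", whales)):
    print(f"{label}: {row['lost_per_month']:,}/month, "
          f"{row['lost_per_year']:,}/year, "
          f"${row['revenue_loss']:,} revenue, ${row['fee_loss']:,} fees")
```

The regular-member fee loss comes out to $1,342,500,000, which rounds to the $1.3 billion in the table. Multiplying by 12 is the straight-line shortcut the table uses; compounding the 0.25% monthly loss instead gives about 2.96% per year (1 − 0.9975¹²) rather than a flat 3%, so the yearly figures are slightly high but in the same ballpark.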

So right now Amazon is in an enshittification phase while it bets the house on Rufus. Rufus has the tact of a pickaxe to the skull. If you ask Google, it gives you what you want, while Rufus throws shit at you and goes “HERE, THIS IS WHAT YOU BUY NOW!” At a guess, Amazon knows it is bleeding, but it is so committed to the act that it is cutting everything just to keep the ship running: piss away customers and hope for a profit. If Amazon had rolled out the changes incrementally rather than using a grenade to empty a bucket, people might have tolerated this. But even market-wise they are pissing away money; the stock is down 8.18% over the last 6 months.

While Amazon works better as the general store, they are trying to get into a niche market, and they have no fucking clue how to do it, because they seem to be playing “what can we replace?” without realizing the limitations of the new thing.

They’ve replaced the general store with a high-stakes AI experiment, and right now the experiment is blowing up in everyone’s face, like Wile E. Coyote trying to catch a profitable Road Runner.

Anyways, back to my coffee after this steamy thought.